Fairness is the foundation of any promotional game or drawing. Whether a player spins a wheel, flips a card, or waits to hear their name called at a drawing event, they're trusting that the outcome is genuinely random — not influenced, not predictable, and not repeatable. That trust is earned through the quality of the random number generation behind the system.

This article explains how randomness works inside Promo on Demand, both in our games and in our drawing tool. It also covers the broader science of what makes an RNG trustworthy, what makes one fail, and how you can see these principles in action for yourself with an interactive simulation later on this page.

What Is a Random Number Generator?

A Random Number Generator (RNG) is any process or mechanism that produces a value — or a sequence of values — that cannot be predicted in advance. The key word is unpredictability. A sequence that looks random but can be reproduced if you know the starting conditions doesn't meet the bar for true randomness, at least not for security-sensitive applications.

In practice, RNGs fall into three broad families, each with different characteristics, strengths, and appropriate use cases:

- True random number generator (TRNG). Harvests randomness from physical phenomena such as atmospheric noise, thermal fluctuations, radioactive decay, or quantum events. It is genuinely unpredictable because it draws from the real world. Used in hardware security modules and high-stakes cryptographic key generation.

- Pseudorandom number generator (PRNG). Uses a deterministic algorithm seeded with an initial value to produce a sequence that appears random and passes statistical tests. Fast, efficient, and statistically excellent for simulations and games, although quality varies enormously between implementations.

- Cryptographically secure PRNG (CSPRNG). A PRNG that meets additional requirements: even an attacker who knows previous outputs cannot feasibly predict future ones or reconstruct the internal state. Required for passwords, tokens, and encryption keys. Examples include crypto.getRandomValues() in modern browsers.

How a PRNG Works — and What Makes It Trustworthy

Every PRNG starts with a seed, an initial numeric value that is fed into a mathematical formula. The formula updates the generator's internal state and derives an output number from it; the updated state is then fed back in to produce the next output. Repeat this billions of times and you have a stream of numbers with no obvious pattern, even though every single one was mathematically derived from the seed.

Two properties determine whether a PRNG is trustworthy:

- Period length: Every PRNG will eventually repeat its sequence, because it is deterministic and has a finite amount of internal state. A high-quality generator has an astronomically long period before any repeat occurs. The Mersenne Twister, for example, has a period of 2^19937 − 1, a number with more than 6,000 decimal digits; no computer could ever generate enough outputs to cycle through it.

- Statistical uniformity: The output must pass rigorous tests confirming that every value is equally likely to appear, that there are no detectable correlations between consecutive numbers, and that no patterns emerge when outputs are grouped into pairs, triples, or higher dimensions. Widely used test suites include the NIST SP 800-22 battery, the Diehard tests, and the TestU01 library, each made up of many independent statistical tests.

A PRNG that passes these tests produces output that is, for statistical purposes, indistinguishable from true randomness in applications such as games and simulations, where the seed is not known to the observer.
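
The claim that every deterministic generator eventually repeats can be checked directly on a toy linear congruential generator (LCG) with a tiny modulus. The sketch below is illustrative only: `lcgStep` and `lcgPeriod` are hypothetical helpers, and the constants were chosen to contrast a full-length cycle with two short ones, not taken from any production generator. A generator like the Mersenne Twister follows the same logic, but with a state space far too large to exhaust.

```typescript
// Hypothetical helper: one step of a linear congruential generator (LCG),
// next = (a * x + c) mod m. The tiny modulus keeps every value exact.
function lcgStep(x: number, a: number, c: number, m: number): number {
  return (a * x + c) % m;
}

// Run the generator from `seed` until a state repeats, then return the length
// of the cycle it has entered. With only m possible states, some state must
// repeat within m + 1 steps, so the loop always terminates.
function lcgPeriod(a: number, c: number, m: number, seed: number): number {
  const firstSeen = new Map<number, number>();
  let x = seed;
  let step = 0;
  while (true) {
    const previous = firstSeen.get(x);
    if (previous !== undefined) return step - previous;
    firstSeen.set(x, step);
    x = lcgStep(x, a, c, m);
    step++;
  }
}

console.log(lcgPeriod(5, 1, 256, 0));   // 256: visits every state before repeating
console.log(lcgPeriod(5, 0, 256, 1));   // 64: dropping the increment shortens the cycle
console.log(lcgPeriod(1, 128, 256, 0)); // 2: a poor choice alternates between two values
```

The first configuration satisfies the Hull-Dobell conditions for a full period (c coprime to m, and a − 1 divisible by every prime factor of m and by 4 when m is a multiple of 4); the other two show how the same structure with poorly chosen constants cycles much sooner.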

When RNGs Go Wrong

Not all generators are created equal. The history of computing is littered with RNG implementations that appeared to work fine but harbored hidden patterns — patterns that only became visible under statistical analysis or through specific attack vectors. Here are three well-known failures, and why they matter:

RANDU is one of the most infamous failures in computing history. It was a widely distributed random number generator whose outputs obey a simple linear relation: every value is a fixed combination of the two before it. Plotted as points in three-dimensional space, consecutive triples of RANDU outputs fall on just 15 parallel planes instead of filling the cube. Monte Carlo simulations run with RANDU could produce subtly wrong results, and because the generator stayed in use for years after its flaw was published, work that depended on it had to be re-examined.

Linear congruential generators (LCGs) use the formula seed = (a × seed + c) % m and can work well when the constants a, c, and m are chosen carefully. Poorly chosen constants produce outputs with strong serial correlations: successive numbers cluster in predictable ways. Early video game RNGs frequently used LCGs with bad constants, making in-game randomness exploitable by players who learned the patterns.
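
RANDU is itself an LCG, with a = 65539, c = 0, and m = 2^31. The sketch below, a hypothetical illustration rather than any library's code, generates RANDU outputs and checks the algebraic identity behind its failure: since 65539 = 2^16 + 3, squaring it modulo 2^31 gives 6 × 65539 − 9, so every output is fixed by the two before it. That linear relation is what confines consecutive triples to 15 planes.

```typescript
// RANDU as an LCG: a = 65539, c = 0, m = 2^31 (seeds are meant to be odd).
const RANDU_A = 65539;
const RANDU_M = 2 ** 31;

// 65539 * x < 2^53 for any x < 2^31, so ordinary number arithmetic is exact.
function randuNext(x: number): number {
  return (RANDU_A * x) % RANDU_M;
}

// Produce `count` consecutive RANDU outputs after `seed`.
function randuSequence(seed: number, count: number): number[] {
  const out: number[] = [];
  let x = seed;
  for (let i = 0; i < count; i++) {
    x = randuNext(x);
    out.push(x);
  }
  return out;
}

// Predict the next output from the previous two using
// x[k+2] = (6 * x[k+1] - 9 * x[k]) mod 2^31.
function predictNext(x0: number, x1: number): number {
  const r = (6 * x1 - 9 * x0) % RANDU_M;
  return r < 0 ? r + RANDU_M : r; // JavaScript's % keeps the sign of the dividend
}

const xs = randuSequence(1, 1000);
const everyOutputPredicted = xs
  .slice(2)
  .every((x, i) => predictNext(xs[i], xs[i + 1]) === x);
console.log(everyOutputPredicted); // true
```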

Even a mathematically perfect PRNG becomes predictable if its seed is weak. Early web applications sometimes seeded RNGs using the current timestamp in milliseconds — a value that an attacker who knew approximately when the seed was set could reconstruct with only a few thousand guesses. The randomness of the output is only as strong as the entropy of the seed.
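
The weak-seeding attack is easy to reproduce. This sketch assumes a hypothetical application that seeds a simple 32-bit LCG (using the well-known Numerical Recipes constants) with the low 32 bits of a millisecond timestamp and then reveals one output. An attacker who knows the seeding time to within five seconds recovers the seed by trying every candidate timestamp; all names and values here are made up for illustration.

```typescript
// Hypothetical 32-bit LCG with Numerical Recipes constants, used only to show
// how a guessable seed undermines an otherwise deterministic generator.
function lcg32(state: number): number {
  // Math.imul keeps the low 32 bits of the product; >>> 0 wraps to unsigned.
  return (Math.imul(1664525, state) + 1013904223) >>> 0;
}

// The "victim" seeds from a millisecond timestamp and reveals its first output.
function firstOutputFromTimestamp(timestampMs: number): number {
  const seed = timestampMs >>> 0; // low 32 bits of the timestamp
  return lcg32(seed);
}

// The attacker only knows the seed was set within ±windowMs of approxMs.
function recoverTimestamp(observed: number, approxMs: number, windowMs: number): number | null {
  for (let t = approxMs - windowMs; t <= approxMs + windowMs; t++) {
    if (firstOutputFromTimestamp(t) === observed) return t;
  }
  return null;
}

const secretMs = 1_700_000_000_123; // when the victim seeded (made-up value)
const observed = firstOutputFromTimestamp(secretMs);
const guessed = recoverTimestamp(observed, 1_700_000_002_000, 5_000);
console.log(guessed === secretMs); // true: at most 10,001 guesses were needed
```

Because the LCG step is a one-to-one mapping on 32-bit states, a single observed output pins down the seed uniquely within the search window. A CSPRNG seeded from system entropy removes the shortcut entirely.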

We do not use any of these approaches in Promo on Demand. Our games and drawing tool rely on the RNG implementations built into modern browser engines, which are widely deployed, publicly documented, and continually tested and improved by some of the largest engineering organizations in the world.

RNG in Our Games

Every P.O.D. game runs entirely in a modern web browser: Chrome, Edge, Safari, or Firefox. These browsers implement JavaScript's Math.random() function with well-studied generators. V8, the engine powering Chrome and Edge, uses an algorithm called xorshift128+, and other engines use equivalent high-quality generators.

Xorshift128+ maintains 128 bits of internal state, has a period of 2^128 − 1 before any sequence repeats, and is reported to pass the full TestU01 Big Crush battery, one of the most demanding statistical test suites available. It is seeded automatically by the browser from high-entropy system sources at startup, so the seed itself is not predictable.
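
For readers who want to see what xorshift128+ actually computes, here is a minimal TypeScript sketch of the generator as described by Sebastiano Vigna, using the shift constants 23, 17, and 26 and BigInt to emulate unsigned 64-bit arithmetic. It is not copied from any browser engine: the class name, the seeding, and the conversion to a double in [0, 1) (top 53 bits divided by 2^53) are assumptions, and real engines may differ in those details.

```typescript
const MASK64 = (1n << 64n) - 1n;

// Sketch of xorshift128+ with shift constants 23, 17, 26. BigInt emulates
// unsigned 64-bit words; illustrative only, not a browser engine's source.
class XorShift128Plus {
  private s0: bigint;
  private s1: bigint;

  constructor(seed0: bigint, seed1: bigint) {
    this.s0 = seed0 & MASK64;
    this.s1 = seed1 & MASK64;
    // An all-zero state would output zeros forever, so it is rejected.
    if (this.s0 === 0n && this.s1 === 0n) {
      throw new Error("xorshift128+ state must not be all zero");
    }
  }

  // Advance the 128-bit state and return a 64-bit output.
  nextUint64(): bigint {
    let x = this.s0;
    const y = this.s1;
    this.s0 = y;
    x ^= (x << 23n) & MASK64;
    x ^= x >> 17n;
    x ^= y ^ (y >> 26n);
    this.s1 = x;
    // The "+" in the name: output is the sum of the two state words mod 2^64.
    return (this.s0 + this.s1) & MASK64;
  }

  // Map the top 53 bits of an output to a double in [0, 1).
  nextDouble(): number {
    return Number(this.nextUint64() >> 11n) / 2 ** 53;
  }
}

const rng = new XorShift128Plus(0x9e3779b97f4a7c15n, 0xbf58476d1ce4e5b9n);
console.log(rng.nextDouble(), rng.nextDouble(), rng.nextDouble());
```

Each call costs only a few shifts, XORs, and one addition, which is why the generator is fast enough for game loops. The same two seeds always reproduce the same sequence; the unpredictability in a browser comes from the engine choosing those seeds from system entropy.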

Within each game, randomness is applied at two distinct levels:

Outcome Selection: Probability-Weighted Draw

When a player triggers a game, the system builds an array of possible outcomes weighted by the odds you configured in the customization panel. If Prize A has 30% odds and Prize B has 70% odds, the array is populated with 30 instances of Prize A and 70 of Prize B (scaled to 100 total). A single call to Math.random() selects a random index from that array. Because each index is equally likely to be chosen and the array is proportionally populated, each prize's observed win rate converges to its configured odds as the number of plays grows. There is no fixed cycle, no pattern, and no memory of previous outcomes.
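
The weighted-array selection described above can be sketched in a few lines. This is a hedged illustration, not Promo on Demand's source: the `PrizeOdds` shape, the function names, and the requirement that odds be whole percentages summing to 100 are assumptions made to mirror the 30/70 example. The optional `random` parameter defaults to Math.random() and exists only so the logic can be tested with fixed values.

```typescript
// Hypothetical configuration shape: whole-number percentages summing to 100.
interface PrizeOdds {
  prize: string;
  percent: number;
}

// Build the proportionally populated outcome array: a prize with 30% odds
// fills 30 of the 100 slots.
function buildOutcomeArray(odds: PrizeOdds[]): string[] {
  const total = odds.reduce((sum, o) => sum + o.percent, 0);
  if (total !== 100 || odds.some((o) => !Number.isInteger(o.percent) || o.percent < 0)) {
    throw new Error("this sketch expects whole-number percentages summing to 100");
  }
  const outcomes: string[] = [];
  for (const o of odds) {
    for (let i = 0; i < o.percent; i++) outcomes.push(o.prize);
  }
  return outcomes;
}

// One play: pick a single uniformly random index into the array.
function pickOutcome(outcomes: string[], random: () => number = Math.random): string {
  return outcomes[Math.floor(random() * outcomes.length)];
}

const outcomes = buildOutcomeArray([
  { prize: "Prize A", percent: 30 },
  { prize: "Prize B", percent: 70 },
]);
const tallies = new Map<string, number>();
for (let play = 0; play < 100_000; play++) {
  const prize = pickOutcome(outcomes);
  tallies.set(prize, (tallies.get(prize) ?? 0) + 1);
}
console.log(tallies);
```

Over 100,000 simulated plays the tallies land close to 30% and 70%, though the exact counts differ on every run.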

Position & Layout Shuffling: Fisher-Yates Algorithm

Games that reveal items in a grid or sequence, such as card-based games and spot-reveal formats, use the Fisher-Yates shuffle to randomize the position of elements before each play. Fisher-Yates is a proven algorithm for producing a uniformly random permutation of any list: given uniform random numbers, every possible arrangement is equally likely. Each position in turn is swapped with a randomly chosen position from those not yet fixed (possibly itself), so no arrangement is favored over another. It is the standard algorithm used wherever unbiased shuffling is required.
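
A minimal Fisher-Yates sketch, in the in-place form often credited to Durstenfeld, written here as a hypothetical helper `fisherYatesShuffle` that returns a shuffled copy. The tally at the end shuffles three cards 60,000 times; if the shuffle is unbiased, each of the six orderings should appear about 10,000 times.

```typescript
// Fisher-Yates shuffle: walk from the end, swapping each position with a
// uniformly chosen position at or before it.
function fisherYatesShuffle<T>(items: readonly T[], random: () => number = Math.random): T[] {
  const a = items.slice(); // shuffle a copy, leave the input untouched
  for (let i = a.length - 1; i > 0; i--) {
    const j = Math.floor(random() * (i + 1)); // 0 <= j <= i
    [a[i], a[j]] = [a[j], a[i]];
  }
  return a;
}

// Tally how often each ordering of three cards appears.
const tally = new Map<string, number>();
for (let n = 0; n < 60_000; n++) {
  const key = fisherYatesShuffle(["A", "B", "C"]).join("");
  tally.set(key, (tally.get(key) ?? 0) + 1);
}
console.log(tally); // six orderings, each close to 10,000
```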

RNG in Our Drawing Tool

The drawing tool operates on a pool of eligible participants — your uploaded player list. When a draw is initiated, the system performs a random selection from the active, undrawn entries in the pool. Previously drawn participants are excluded from subsequent draws within the same session, ensuring no player can be selected twice.
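
Drawing without replacement can be sketched as follows. The `DrawingSession` class is hypothetical and only mirrors the behavior described above: each draw picks uniformly from the entries not yet drawn and removes the winner from the pool. Collapsing duplicate names in the uploaded list is an extra assumption of this sketch, added so the same person cannot appear twice in the pool.

```typescript
// Hypothetical drawing session: each draw picks uniformly among entries not
// yet drawn, then removes the winner so no one can be selected twice.
class DrawingSession {
  private readonly undrawn: string[];
  readonly winners: string[] = [];

  constructor(participants: readonly string[]) {
    // Assumption for this sketch: duplicate names in the upload are collapsed.
    this.undrawn = Array.from(new Set(participants));
  }

  get remaining(): number {
    return this.undrawn.length;
  }

  draw(random: () => number = Math.random): string {
    if (this.undrawn.length === 0) {
      throw new Error("no eligible participants left");
    }
    const i = Math.floor(random() * this.undrawn.length);
    const winner = this.undrawn[i];
    // Swap-remove: move the last entry into slot i, then shrink the pool.
    this.undrawn[i] = this.undrawn[this.undrawn.length - 1];
    this.undrawn.pop();
    this.winners.push(winner);
    return winner;
  }
}

const session = new DrawingSession(["Ana", "Ben", "Chloe", "Dev"]);
console.log(session.draw(), session.draw(), session.remaining); // two winners, then 2
```

Removing a winner by swapping in the last entry keeps each draw constant-time. The order of the remaining pool changes, but since the next draw picks a uniform index anyway, that does not affect fairness.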

The Lottery Tool's visual ping-ball animation is exactly that — a visual representation. The winner has already been determined by the RNG before the first ball starts moving. The animation builds anticipation and creates an engaging floor experience, but it has no bearing on the outcome. The selection mechanism operates independently and impartially before the display begins.

The audit trail records the exact timestamp of every draw, the winner selected, and the staff member who initiated it — providing a complete, verifiable chain of custody for every promotional event you run.

How Randomness Is Validated: Statistical Testing

Claiming that an RNG is random is not enough; it has to be demonstrated. In practice, this is done with standardized statistical test suites that probe for many known types of non-randomness. Three of the most widely used are:

- NIST SP 800-22: Published by the U.S. National Institute of Standards and Technology. A battery of 15 tests covering frequency analysis, runs, longest runs, spectral analysis, and more. Widely used to evaluate generators intended for cryptographic applications.

- Diehard Tests: Developed by statistician George Marsaglia, this suite subjects a generator to a battery of tests for uniformity and independence. A generator that fails a Diehard test consistently, across repeated runs, has a measurable bias.

- TestU01 Big Crush: One of the most comprehensive test suites available, containing 106 statistical tests. A consistent failure on even a single Big Crush test is considered a serious deficiency. The xorshift128+ algorithm used in Chrome and Edge is reported to pass the entire suite.

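The RANDU generator mentioned earlier fails modern suites such as Diehard badly. Its core flaw was in fact exposed in the late 1960s, when George Marsaglia showed that the outputs of multiplicative congruential generators like RANDU fall mainly in planes, yet by then the generator had already been distributed and used widely, and it remained in use for years afterward.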

See It For Yourself: Live Monte Carlo Simulation

A Monte Carlo simulation runs a random process hundreds or thousands of times and observes how the outcomes are distributed. It is one of the primary tools statisticians use to check that a random process is behaving as expected. The name refers to the Monte Carlo Casino in Monaco, a nod to the games of chance at the heart of the method.

The simulation below uses JavaScript's Math.random(), the same RNG powering every P.O.D. game, to flip a virtual coin 500 times and track how the cumulative percentage of heads evolves over those flips. For a fair coin, the expected proportion of heads is 50%. Watch what happens:

Notice a few things as you run this repeatedly:

- Early volatility. With only 10 or 20 flips, the cumulative percentage can swing wildly — 70% heads, 30% tails, anything is possible. This is normal and expected with a small sample.

- Convergence over time. As flips accumulate past 100, 200, 300, the line settles closer and closer to the 50% reference. After 500 flips, the standard deviation of the heads percentage is about 2.2 percentage points, so roughly 95% of runs end within about 4.4 points of 50%.

- No two runs are identical. Each run produces a distinct path toward convergence. There is no fixed pattern, no cycle, no memory of the previous run. This is exactly what a well-functioning PRNG should do.
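
The coin-flip experiment can also be reproduced outside the page. This sketch assumes nothing about how the interactive chart is built: `cumulativeHeadsPercent` is a hypothetical function that flips a virtual coin with Math.random() and records the running percentage of heads, and the checkpoints printed match the flip counts discussed above.

```typescript
// Flip a virtual coin `flips` times and record the cumulative percentage of
// heads after each flip.
function cumulativeHeadsPercent(flips: number, random: () => number = Math.random): number[] {
  const path: number[] = [];
  let heads = 0;
  for (let i = 1; i <= flips; i++) {
    if (random() < 0.5) heads++; // heads with probability 1/2
    path.push((100 * heads) / i);
  }
  return path;
}

const path = cumulativeHeadsPercent(500);
for (const checkpoint of [10, 20, 100, 200, 300, 500]) {
  console.log(`after ${checkpoint} flips: ${path[checkpoint - 1].toFixed(1)}% heads`);
}
```

Running it several times shows the same pattern as the chart: wide swings at 10 or 20 flips, a much narrower band by 500.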

Sample Size and the Law of Large Numbers

The convergence you just observed has a formal name: the Law of Large Numbers. Stated plainly: as the number of independent trials of a random process increases, the observed average outcome will converge toward the theoretical expected value.

This is not a quirk or a coincidence — it is a mathematical theorem, first proven rigorously by Jacob Bernoulli in 1713. It has profound practical implications for anyone running promotional games:

- Short promotions will deviate from the expected odds. If you run a game with a 10% jackpot probability 20 times, you might land 0 jackpots or 4 jackpots — both outcomes are statistically reasonable. The 10% probability only manifests reliably over hundreds of plays.

- Longer promotions produce more predictable budgets. The more plays your promotion generates, the closer your actual prize distribution will track your configured odds. A 500-play event will produce results much closer to your theoretical budget than a 50-play event.

- Predictable results require a sufficient sample size. A promotion, like a simulation, needs enough plays for the results to converge. Rule of thumb: at least 100 plays per prize tier for meaningful budget predictability, with 300+ plays producing strong statistical alignment. The rarer a prize, the more plays it takes before its observed rate settles near its configured odds.

The Law of Large Numbers in practice: If your highest-value prize has 1% odds, you should expect approximately 1 winner per 100 plays, but any individual 100-play event could produce 0 or 3 winners. Over 1,000 plays you should expect about 10 winners, usually somewhere between 4 and 16; the spread grows in absolute terms but shrinks relative to the expected count. Plan prize budgets for the long run, and carry a contingency for short-run events.
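
The spreads quoted in this section follow from the binomial distribution, which gives the probability of exactly k winners in n independent plays at win probability p. A minimal sketch, with hypothetical helper names, computes those probabilities exactly using the recurrence P(k + 1) = P(k) × (n − k)/(k + 1) × p/(1 − p).

```typescript
// Exact binomial probabilities: pmf[k] = P(exactly k winners in n plays),
// each play winning independently with probability p (0 < p < 1).
// Ordinary floating point is adequate for the sizes used here.
function binomialPmf(n: number, p: number): number[] {
  const pmf: number[] = [(1 - p) ** n];
  for (let k = 0; k < n; k++) {
    // P(k + 1) = P(k) * (n - k) / (k + 1) * p / (1 - p)
    pmf.push((pmf[k] * (n - k) * p) / ((k + 1) * (1 - p)));
  }
  return pmf;
}

// Probability that the winner count falls between lo and hi, inclusive.
function probabilityBetween(pmf: number[], lo: number, hi: number): number {
  let total = 0;
  for (let k = Math.max(0, lo); k <= Math.min(hi, pmf.length - 1); k++) {
    total += pmf[k];
  }
  return total;
}

// 10% jackpot odds over 20 plays: zero or four jackpots are both plausible.
const twentyPlays = binomialPmf(20, 0.1);
console.log(twentyPlays[0].toFixed(3), twentyPlays[4].toFixed(3)); // 0.122 0.090

// 1% prize over 1,000 plays: chance of landing between 4 and 16 winners.
console.log(probabilityBetween(binomialPmf(1000, 0.01), 4, 16).toFixed(3));

// Spread relative to the expected count shrinks as plays increase.
for (const plays of [100, 1000, 10000]) {
  const mean = plays * 0.01;
  const sd = Math.sqrt(plays * 0.01 * 0.99);
  console.log(`${plays} plays: expect ${mean} winners, relative spread ${((100 * sd) / mean).toFixed(0)}%`);
}
```

The relative spread (standard deviation divided by the expected count) drops from about 99% at 100 plays to about 31% at 1,000 and about 10% at 10,000, which is the Law of Large Numbers at work.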

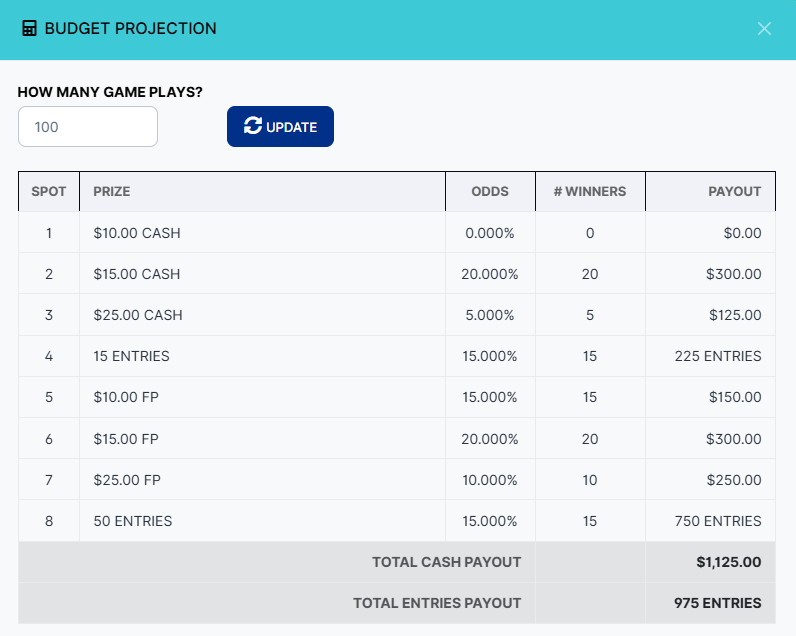

Budget Projection: Your Pre-Flight Check

Because the Law of Large Numbers takes time to work, we've built a practical tool directly into the game customization page: the Budget Projection. Before you save and launch any promotion, you can open this tool to see a forward-looking estimate of how your configured odds will translate into winners and total payout across a given number of plays.

Enter the number of plays you expect to run and click Update. The tool calculates:

- Expected winners per prize tier — Based on your configured odds multiplied by the number of plays.

- Total cash payout — The sum of all monetary prizes at the projected winner counts.

- Total entries payout — The sum of all drawing entry prizes, giving you a sense of downstream drawing pool sizes.
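
The Budget Projection arithmetic described above can be sketched as follows. The `PrizeTier` fields, the `projectBudget` function, and the sample tiers and values are hypothetical, chosen only to illustrate the calculation: expected winners are plays × odds, and each payout total sums prize value × expected winners.

```typescript
// Hypothetical prize-tier shape; field names are illustrative, not the
// product's actual data model.
interface PrizeTier {
  name: string;
  oddsPercent: number; // configured odds, e.g. 1 for 1%
  cashValue?: number;  // monetary prize per winner, if any
  entries?: number;    // drawing entries awarded per winner, if any
}

interface Projection {
  expectedWinners: Record<string, number>;
  totalCash: number;
  totalEntries: number;
}

// Expected winners = plays * odds; payouts sum value * expected winners.
function projectBudget(tiers: PrizeTier[], plays: number): Projection {
  const expectedWinners: Record<string, number> = {};
  let totalCash = 0;
  let totalEntries = 0;
  for (const tier of tiers) {
    const winners = (plays * tier.oddsPercent) / 100;
    expectedWinners[tier.name] = winners;
    totalCash += winners * (tier.cashValue ?? 0);
    totalEntries += winners * (tier.entries ?? 0);
  }
  return { expectedWinners, totalCash, totalEntries };
}

const tiers: PrizeTier[] = [
  { name: "Jackpot", oddsPercent: 1, cashValue: 500 },
  { name: "Free Play", oddsPercent: 20, cashValue: 10 },
  { name: "Bonus Entries", oddsPercent: 79, entries: 5 },
];
for (const plays of [50, 200, 500]) {
  console.log(plays, projectBudget(tiers, plays));
}
```

These are expected values, not guarantees; as the Law of Large Numbers discussion above explains, short events can land well above or below them.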

Try different play counts — 50, 200, 500 — to understand how your budget scales. This is the recommended step before every new promotion: confirm the projected payout is within your budget before a single player takes a turn.

In Summary: How We Approach Fairness

Fairness in a promotional game isn't just an ethical obligation — it's the only way the experience works at all. Players who suspect an outcome is influenced or rigged disengage immediately. The credibility of every promotion you run depends on the integrity of the randomness behind it.

Here is how Promo on Demand approaches that responsibility:

- We use industry-standard, statistically verified RNG. Every game runs in a modern browser engine whose Math.random() implementation uses xorshift128+ (in Chrome and Edge) or a comparable generator, an algorithm reported to pass demanding test suites including TestU01 Big Crush.

- We use the Fisher-Yates shuffle for position randomization. It is the mathematically correct, unbiased algorithm for permutation, not a naive swap or sort-based approach that introduces subtle ordering biases.

- We use probability-weighted outcome arrays for prize selection. The odds you configure are faithfully encoded into the selection mechanism. There is no adjustment, no house tilt, and no memory of previous outcomes.

- We use the same RNG engine for drawings. The player pool selection draws from the same trusted PRNG and tracks already-drawn entries so that no one can win twice in the same session.

- We do not use weak, legacy, or discredited RNG methods. No RANDU, no naive LCGs, no timestamp-seeded generators.

- We provide a Budget Projection tool. So you can verify the statistical implications of your configured odds before going live — applying the Law of Large Numbers to your specific promotion.

- Every outcome is logged with a full audit trail. Timestamp, result, and user — for every play and every drawing — giving you a permanent, reviewable record of every promotional event.
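
The difference between Fisher-Yates and a naive swap can be verified exhaustively rather than statistically. The sketch below enumerates every possible sequence of random choices for a three-card shuffle. In the naive variant (a hypothetical illustration of the flawed approach), each position swaps with any of the three positions, giving 3^3 = 27 equally likely paths; since 27 is not divisible by the 6 possible orderings, some orderings must come up more often than others. Fisher-Yates narrows the choices at each step, giving 3 × 2 × 1 = 6 paths, one per ordering.

```typescript
// Candidate swap targets for position i in a list of length n.
type Chooser = (i: number, n: number) => number[];

// Naive variant: at every position, swap with ANY position 0..n-1.
const naive: Chooser = (_i, n) => Array.from({ length: n }, (_, j) => j);
// Fisher-Yates (forward form): at position i, swap with a position i..n-1.
const fisherYates: Chooser = (i, n) => Array.from({ length: n - i }, (_, j) => i + j);

// Follow every possible sequence of swap choices and count final orderings.
function orderingCounts(cards: string[], choices: Chooser): Map<string, number> {
  const counts = new Map<string, number>();
  const visit = (a: string[], i: number): void => {
    if (i === a.length) {
      const key = a.join("");
      counts.set(key, (counts.get(key) ?? 0) + 1);
      return;
    }
    for (const j of choices(i, a.length)) {
      const b = a.slice();
      [b[i], b[j]] = [b[j], b[i]];
      visit(b, i + 1);
    }
  };
  visit(cards.slice(), 0);
  return counts;
}

console.log(orderingCounts(["A", "B", "C"], naive));       // 27 paths over 6 orderings: uneven
console.log(orderingCounts(["A", "B", "C"], fisherYates)); // 6 paths, each ordering exactly once
```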

If you ever have questions about the randomness or fairness of a specific result, the audit trail is your first resource. Every outcome was generated by the same mechanism described in this article — transparent, testable, and built on three decades of browser engine development. Contact our team if you'd like to discuss any aspect of how randomness is handled in your promotions.